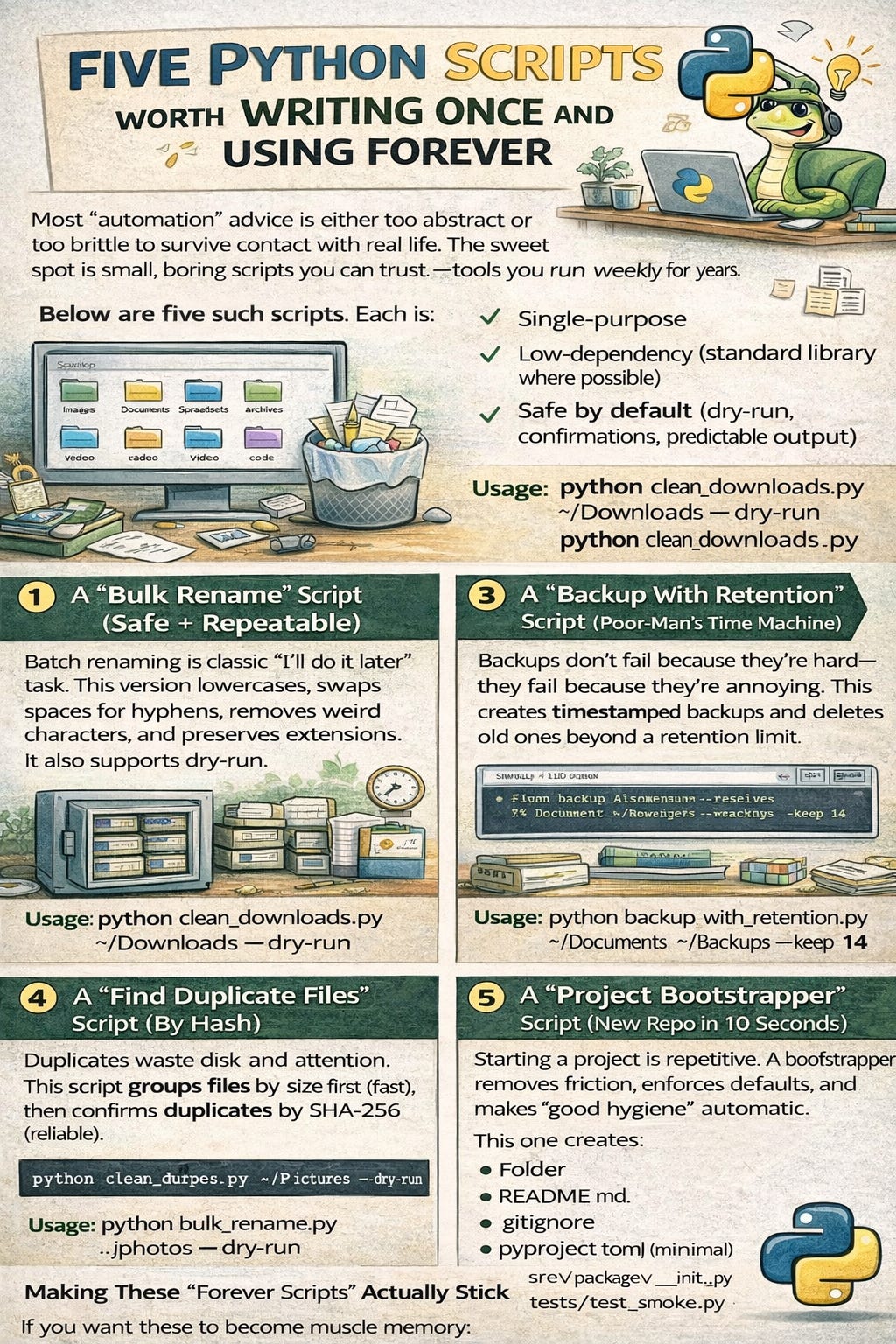

Five Python Scripts Worth Writing Once and Using Forever

Most “automation” advice is either too abstract or too brittle to survive contact with real life. The sweet spot is small, boring scripts you can trust—tools you run weekly for years.

Below are five such scripts. Each is:

Single-purpose

Low-dependency (standard library where possible)

Safe by default (dry-run, confirmations, predictable output)
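The scripts below lean on dry-run rather than interactive prompts. If you also want the "confirmations" part of safe-by-default, a small helper along these lines is one option. This is only a sketch: the `confirm` name and its `ask` parameter are hypothetical and do not appear in any of the five scripts.

```python
from typing import Callable


def confirm(prompt: str, ask: Callable[[str], str] = input) -> bool:
    """Return True only for an explicit yes; anything else counts as no."""
    answer = ask(f"{prompt} [y/N] ").strip().lower()
    return answer in {"y", "yes"}


if __name__ == "__main__":
    # Simulated answers so the demo runs without a terminal.
    print(confirm("Delete 3 old backups?", ask=lambda _: "yes"))
    print(confirm("Delete 3 old backups?", ask=lambda _: ""))
```

Defaulting to "no" on an empty answer means that hitting Enter by accident never triggers the destructive path.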

1) A “Downloads Cleaner” That Files Messy Stuff Automatically

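If you download a lot of PDFs, images, and archives, your Downloads folder becomes entropy. This script moves files into type-based subfolders (images, documents, archives, and so on). When a file with the same name is already there, it adds a timestamp to the new name instead of overwriting.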

#!/usr/bin/env python3
"""Move loose files in a folder into category subfolders by extension."""
from __future__ import annotations

import argparse
import shutil
from datetime import datetime
from pathlib import Path

CATEGORIES = {
    "images": {".png", ".jpg", ".jpeg", ".gif", ".webp", ".svg"},
    "documents": {".pdf", ".docx", ".doc", ".txt", ".md", ".rtf"},
    "spreadsheets": {".xlsx", ".xls", ".csv"},
    "archives": {".zip", ".tar", ".gz", ".7z", ".rar"},
    "audio": {".mp3", ".wav", ".m4a", ".flac"},
    "video": {".mp4", ".mov", ".mkv", ".avi"},
    "code": {".py", ".js", ".ts", ".java", ".go", ".rs", ".json", ".yaml", ".yml"},
}


def categorize(path: Path) -> str:
    """Return the category for a file based on its (case-insensitive) extension."""
    ext = path.suffix.lower()
    for cat, exts in CATEGORIES.items():
        if ext in exts:
            return cat
    return "other"


def unique_destination(dst: Path) -> Path:
    """Return dst, or a timestamped variant of it if dst already exists."""
    if not dst.exists():
        return dst
    stem, suffix = dst.stem, dst.suffix
    ts = datetime.now().strftime("%Y%m%d-%H%M%S")
    candidate = dst.with_name(f"{stem}-{ts}{suffix}")
    # Two collisions within the same second would share a timestamp,
    # so add a counter until the name is free.
    n = 1
    while candidate.exists():
        candidate = dst.with_name(f"{stem}-{ts}-{n}{suffix}")
        n += 1
    return candidate


def main() -> int:
    p = argparse.ArgumentParser(description="Clean a folder by moving files into category subfolders.")
    p.add_argument("folder", type=Path, help="Folder to clean (e.g., ~/Downloads)")
    p.add_argument("--dry-run", action="store_true", help="Print actions without moving files")
    args = p.parse_args()

    folder: Path = args.folder.expanduser().resolve()
    if not folder.is_dir():
        raise SystemExit(f"Not a folder: {folder}")

    # sorted() snapshots the listing, since the loop creates subfolders in `folder`.
    for item in sorted(folder.iterdir()):
        if item.is_dir():
            continue
        cat = categorize(item)
        target_dir = folder / cat
        dst = unique_destination(target_dir / item.name)
        if args.dry_run:
            print(f"[DRY] {item.name} -> {dst.relative_to(folder)}")
        else:
            # Create the category folder only when actually moving,
            # so a dry run leaves the disk untouched.
            target_dir.mkdir(exist_ok=True)
            shutil.move(str(item), str(dst))
            print(f"[OK] {item.name} -> {dst.relative_to(folder)}")
    return 0


if __name__ == "__main__":
    raise SystemExit(main())

Usage

python clean_downloads.py ~/Downloads --dry-run

python clean_downloads.py ~/Downloads

2) A “Bulk Rename” Script (Safe + Repeatable)

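Batch renaming is a classic “I’ll do it later” task. This version lowercases names, swaps spaces for hyphens, replaces every other character outside a-z, 0-9, dot, underscore, and hyphen with a hyphen, and preserves (lowercased) extensions. It skips a rename rather than overwrite an existing file, and it supports dry-run.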

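#!/usr/bin/env python3
"""Rename files in a folder to lowercase, hyphen-separated, ASCII-safe names."""
from __future__ import annotations

import argparse
import re
from pathlib import Path

# Matches runs of anything other than a-z, 0-9, dot, underscore, and hyphen.
SAFE_RE = re.compile(r"[^a-z0-9._-]+")


def slugify(name: str) -> str:
    """Lowercase, turn spaces and unsafe characters into hyphens, collapse repeats."""
    name = name.strip().lower()
    name = name.replace(" ", "-")
    name = SAFE_RE.sub("-", name)
    name = re.sub(r"-{2,}", "-", name).strip("-")
    return name


def main() -> int:
    p = argparse.ArgumentParser(description="Bulk rename files in a folder safely.")
    p.add_argument("folder", type=Path)
    p.add_argument("--dry-run", action="store_true")
    args = p.parse_args()

    folder = args.folder.expanduser().resolve()
    if not folder.is_dir():
        raise SystemExit(f"Not a folder: {folder}")

    for f in sorted(folder.iterdir()):
        if f.is_dir():
            continue
        slug = slugify(f.stem)
        if not slug:
            # Nothing safe is left in the name (e.g. "###.txt"); leave the file alone.
            print(f"[SKIP] {f.name} (no safe characters in name)")
            continue
        new_name = slug + f.suffix.lower()
        if new_name == f.name:
            continue
        dst = f.with_name(new_name)
        # On case-insensitive filesystems a case-only rename already "exists";
        # samefile() separates that from a real collision.
        if dst.exists() and not dst.samefile(f):
            print(f"[SKIP] {f.name} -> {new_name} (exists)")
            continue
        if args.dry_run:
            print(f"[DRY] {f.name} -> {new_name}")
        else:
            f.rename(dst)
            print(f"[OK] {f.name} -> {new_name}")
    return 0


if __name__ == "__main__":
    raise SystemExit(main())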

Usage

python bulk_rename.py ./photos --dry-run

python bulk_rename.py ./photos
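To see what the renaming rule does to awkward names before pointing it at real files, it can help to call it directly. The sketch below repeats `slugify` from the script so it runs on its own; the `renamed` helper and the sample filenames are made up for illustration.

```python
import re
from pathlib import Path

SAFE_RE = re.compile(r"[^a-z0-9._-]+")


def slugify(name: str) -> str:
    # Same steps as the script's slugify, repeated so this runs standalone.
    name = name.strip().lower()
    name = name.replace(" ", "-")
    name = SAFE_RE.sub("-", name)
    return re.sub(r"-{2,}", "-", name).strip("-")


def renamed(filename: str) -> str:
    """Apply the script's rule: slugified stem plus lowercased extension."""
    p = Path(filename)
    return slugify(p.stem) + p.suffix.lower()


for sample in ["Holiday Photo (1).JPG", "Café Menu.pdf", "report_v2.final.txt", "###.txt"]:
    print(f"{sample!r} -> {renamed(sample)!r}")
```

Two things stand out. Non-ASCII letters are replaced rather than transliterated, so "Café Menu.pdf" becomes "caf-menu.pdf". And a name made only of symbols collapses to an empty stem, so such files are better skipped than renamed.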

3) A “Backup With Retention” Script (Poor-Man’s Time Machine)

Backups don’t fail because they’re hard—they fail because they’re annoying. This creates timestamped backups and deletes old ones beyond a retention limit.

#!/usr/bin/env python3
"""Copy a folder to a timestamped backup and prune the oldest backups."""
from __future__ import annotations

import argparse
import re
import shutil
from datetime import datetime
from pathlib import Path


def main() -> int:
    p = argparse.ArgumentParser(description="Create timestamped backups with retention.")
    p.add_argument("source", type=Path, help="Folder to back up")
    p.add_argument("dest_root", type=Path, help="Where to store backups")
    p.add_argument("--keep", type=int, default=10, help="How many backups to keep")
    args = p.parse_args()

    # keep=0 or a negative value would prune every backup, including the new one.
    if args.keep < 1:
        raise SystemExit("--keep must be at least 1")

    src = args.source.expanduser().resolve()
    dest_root = args.dest_root.expanduser().resolve()
    if not src.is_dir():
        raise SystemExit(f"Source not a folder: {src}")
    # Backing up into the source would copy earlier backups into each new one.
    if dest_root == src or src in dest_root.parents:
        raise SystemExit("Destination must not be inside the source folder")

    dest_root.mkdir(parents=True, exist_ok=True)
    stamp = datetime.now().strftime("%Y%m%d-%H%M%S")
    backup_dir = dest_root / f"{src.name}-{stamp}"

    print(f"[INFO] Backing up {src} -> {backup_dir}")
    shutil.copytree(src, backup_dir)

    # Match only "<name>-YYYYMMDD-HHMMSS" so unrelated folders sharing the prefix
    # are never pruned. Zero-padded stamps sort chronologically by name.
    pattern = re.compile(rf"{re.escape(src.name)}-\d{{8}}-\d{{6}}")
    backups = sorted(d for d in dest_root.iterdir() if d.is_dir() and pattern.fullmatch(d.name))

    for b in backups[: max(0, len(backups) - args.keep)]:
        print(f"[CLEAN] Removing old backup: {b}")
        shutil.rmtree(b)

    print("[OK] Backup complete.")
    return 0


if __name__ == "__main__":
    raise SystemExit(main())

Usage

python backup_with_retention.py ~/Documents ~/Backups --keep 14

If you want real incremental backups, look at rsync/restic/borg. But this script wins on “will you actually use it.”
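The retention step depends on a detail that is easy to miss: because the timestamp is formatted as `%Y%m%d-%H%M%S` with zero padding, sorting backup folder names alphabetically also sorts them by age. A minimal sketch of that selection as a pure function follows; `select_expired` is a hypothetical name used only for this illustration.

```python
from __future__ import annotations


def select_expired(names: list[str], keep: int) -> list[str]:
    """Return the backup names to delete, oldest first, keeping the newest `keep`."""
    if keep < 1:
        raise ValueError("keep must be at least 1")
    ordered = sorted(names)  # zero-padded timestamps sort chronologically
    return ordered[: max(0, len(ordered) - keep)]


names = [
    "Documents-20240301-090000",
    "Documents-20231231-235959",
    "Documents-20240115-120000",
]
print(select_expired(names, keep=2))  # ['Documents-20231231-235959']
```

Keeping the selection separate from the deletion makes it easy to check which folders would go before anything calls `shutil.rmtree`.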

4) A “Find Duplicate Files” Script (By Hash)

Duplicates waste disk and attention. This script groups files by size first (fast), then confirms duplicates by SHA-256 (reliable).

#!/usr/bin/env python3
"""Report groups of byte-identical files under a folder."""
from __future__ import annotations

import argparse
import hashlib
from collections import defaultdict
from pathlib import Path


def sha256_file(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file in chunks so large files never have to fit in memory."""
    h = hashlib.sha256()
    with path.open("rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            h.update(chunk)
    return h.hexdigest()


def main() -> int:
    p = argparse.ArgumentParser(description="Find duplicate files under a folder (by SHA-256).")
    p.add_argument("folder", type=Path)
    p.add_argument("--min-size", type=int, default=1, help="Minimum bytes to consider")
    args = p.parse_args()

    root = args.folder.expanduser().resolve()
    if not root.is_dir():
        raise SystemExit(f"Not a folder: {root}")

    # 1) Group by size to reduce hashing work
    by_size: dict[int, list[Path]] = defaultdict(list)
    for path in root.rglob("*"):
        if path.is_file():
            size = path.stat().st_size
            if size >= args.min_size:
                by_size[size].append(path)

    # 2) Hash only candidates (files that share a size with at least one other file)
    by_hash: dict[str, list[Path]] = defaultdict(list)
    for size, paths in by_size.items():
        if len(paths) < 2:
            continue
        for pth in paths:
            try:
                digest = sha256_file(pth)
            except OSError as e:  # PermissionError is a subclass of OSError
                print(f"[WARN] Skipping {pth}: {e}")
                continue
            by_hash[digest].append(pth)

    # 3) Print duplicates, largest groups first
    dup_groups = [paths for paths in by_hash.values() if len(paths) > 1]
    if not dup_groups:
        print("[OK] No duplicates found.")
        return 0

    print(f"[FOUND] {len(dup_groups)} duplicate groups:\n")
    for i, paths in enumerate(sorted(dup_groups, key=len, reverse=True), start=1):
        print(f"Group {i} ({len(paths)} files):")
        for pth in paths:
            print(f"  - {pth}")
        print()
    return 0


if __name__ == "__main__":
    raise SystemExit(main())

Usage

python find_dupes.py ~/Pictures --min-size 10240
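On Python 3.11 and later, the standard library can handle the chunked read itself through `hashlib.file_digest`. The sketch below uses it when available and falls back to a manual loop on older interpreters. The `sha256_of` name and the temporary sample file are assumptions for illustration, not part of the script above.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path: Path, chunk_size: int = 1024 * 1024) -> str:
    """Hash a file without loading it all into memory."""
    with path.open("rb") as f:
        if hasattr(hashlib, "file_digest"):  # added in Python 3.11
            return hashlib.file_digest(f, "sha256").hexdigest()
        h = hashlib.sha256()
        while chunk := f.read(chunk_size):  # fallback for Python 3.8 to 3.10
            h.update(chunk)
        return h.hexdigest()


with tempfile.TemporaryDirectory() as tmp:
    sample = Path(tmp) / "sample.bin"
    sample.write_bytes(b"hello" * 1000)
    digest = sha256_of(sample)
    # Compare against hashing the same bytes in memory.
    print(digest == hashlib.sha256(b"hello" * 1000).hexdigest())  # True
```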

5) A “Project Bootstrapper” Script (New Repo in 10 Seconds)

Starting a project is repetitive. A bootstrapper removes friction, enforces defaults, and makes “good hygiene” automatic.

This one creates:

Folder

README.md

.gitignore

pyproject.toml (minimal)

src/<package>/__init__.py

tests/test_smoke.py

#!/usr/bin/env python3
"""Create a minimal src-layout Python project skeleton."""
from __future__ import annotations

import argparse
from pathlib import Path

GITIGNORE = """__pycache__/
*.py[cod]
*.egg-info/
dist/
build/
.venv/
.env
.DS_Store
.pytest_cache/
.mypy_cache/
"""

PYPROJECT = """[build-system]
requires = ["setuptools>=68"]
build-backend = "setuptools.build_meta"

[project]
name = "{name}"
version = "0.1.0"
description = "Applied Code project"
readme = "README.md"
requires-python = ">=3.10"
dependencies = []
"""

README = """# {name}

Short description.

## Quickstart

```bash
python -m {pkg}
```
"""

TEST_SMOKE = """def test_smoke():
    assert 1 + 1 == 2
"""


def main() -> int:
    p = argparse.ArgumentParser(description="Bootstrap a minimal Python project structure.")
    p.add_argument("path", type=Path, help="Project directory to create")
    p.add_argument("--package", type=str, default=None, help="Package name (defaults to folder name)")
    args = p.parse_args()

    project_dir = args.path.expanduser().resolve()
    name = project_dir.name
    # Hyphens are not valid in Python package names.
    pkg = (args.package or name).replace("-", "_")

    # Refuse to touch an existing path so a rerun never clobbers work.
    if project_dir.exists():
        raise SystemExit(f"Path already exists: {project_dir}")

    # Create structure
    (project_dir / "src" / pkg).mkdir(parents=True)
    (project_dir / "tests").mkdir(parents=True)

    # Write files
    (project_dir / ".gitignore").write_text(GITIGNORE, encoding="utf-8")
    (project_dir / "README.md").write_text(README.format(name=name, pkg=pkg), encoding="utf-8")
    (project_dir / "pyproject.toml").write_text(PYPROJECT.format(name=name), encoding="utf-8")
    (project_dir / "src" / pkg / "__init__.py").write_text("", encoding="utf-8")
    (project_dir / "tests" / "test_smoke.py").write_text(TEST_SMOKE, encoding="utf-8")

    print(f"[OK] Created project: {project_dir}")
    print(f"  Package: {pkg}")
    return 0


if __name__ == "__main__":
    raise SystemExit(main())

Usage

python bootstrap_project.py ~/code/my-new-tool

Making These “Forever Scripts” Actually Stick

If you want these to become muscle memory:

Put them in one folder, e.g. ~/bin/pytools/

Add that folder to PATH (or make shell aliases)

Always include --dry-run for anything destructive

Prefer idempotent behavior (safe to run twice)
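One way to make a dry-run flag hard to get wrong is to split a script into a planning step, which only computes what would change, and an apply step, which performs it. The dry run then prints exactly the plan the real run would execute, and a second run finds nothing left to do. A minimal sketch, assuming the hypothetical names `plan_renames` and `apply_plan` and a toy rule that swaps spaces for hyphens:

```python
from __future__ import annotations

import tempfile
from pathlib import Path


def plan_renames(folder: Path) -> list[tuple[Path, Path]]:
    """Compute (source, destination) pairs without touching the disk."""
    plan = []
    for f in sorted(folder.iterdir()):
        dst = f.with_name(f.name.replace(" ", "-"))
        if f.is_file() and dst.name != f.name and not dst.exists():
            plan.append((f, dst))
    return plan


def apply_plan(plan: list[tuple[Path, Path]], dry_run: bool) -> None:
    """Print every step; perform the rename only when dry_run is False."""
    for src, dst in plan:
        if dry_run:
            print(f"[DRY] {src.name} -> {dst.name}")
        else:
            src.rename(dst)
            print(f"[OK] {src.name} -> {dst.name}")


with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    (folder / "my notes.txt").write_text("x", encoding="utf-8")

    apply_plan(plan_renames(folder), dry_run=True)   # prints, changes nothing
    unchanged = sorted(p.name for p in folder.iterdir())

    apply_plan(plan_renames(folder), dry_run=False)  # performs the rename
    renamed = sorted(p.name for p in folder.iterdir())

    second_plan = plan_renames(folder)               # idempotent: nothing left to do
```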

Example aliases:

alias clean-downloads="python ~/bin/pytools/clean_downloads.py ~/Downloads"

alias dupes="python ~/bin/pytools/find_dupes.py"